A Resource Pool (RP) is a powerful but dangerous tool when not used properly. RPs are not folders, and they require a proper design phase. Some years ago I wrote an article on the basics; please read it first if you are not familiar with RPs.

I needed to use RPs to address some internal requirements:

- divide cluster resources equally among eight teams

- help with right-sizing of VMs

- limit powered-on but unused VMs

To address the above requirements, I created eight sibling resource pools with the parameters below:

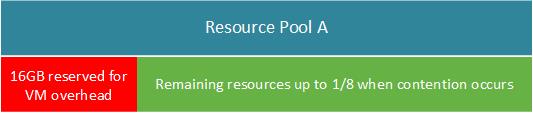

- Memory reservation of 16GB each, no CPU reservation; neither is expandable

- No CPU or MEM limit

- All RPs with the same priority - Normal

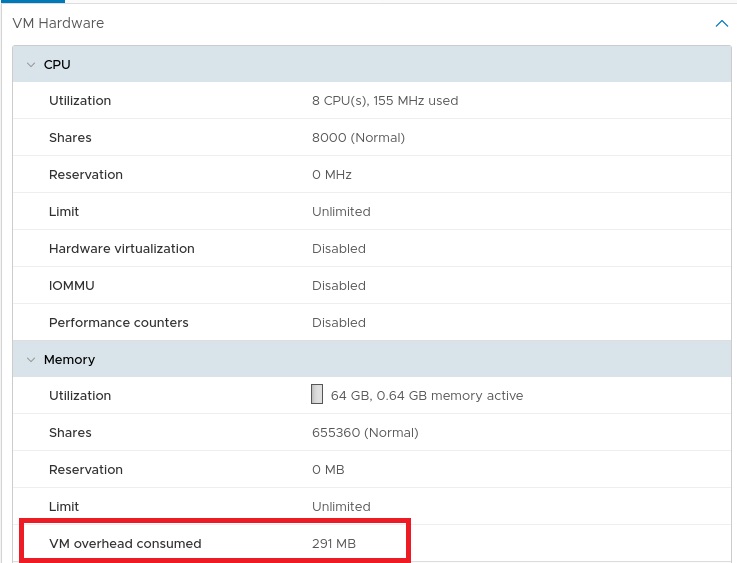

Why a 16GB memory reservation? I configured the CPU/MEM reservations as not expandable, so to allow folks to power on VMs I had to set a static MEM reservation that covers VM overhead. For each powered-on VM, the ESX host consumes some RAM in addition to the VM's configured memory, and that overhead has to be reserved from the pool.
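The effect of a fixed, non-expandable reservation on power-on can be sketched roughly as below. This is a simplified model and not vSphere's actual admission-control logic: the `Vm` class, the `can_power_on` helper and all overhead figures are hypothetical, and it assumes the pool has to cover each powered-on VM's own memory reservation plus its overhead.

```python
from dataclasses import dataclass

POOL_MEM_RESERVATION_MB = 16 * 1024  # 16 GB per resource pool, not expandable


@dataclass
class Vm:
    name: str
    mem_reservation_mb: int  # VM-level memory reservation (0 for most VMs)
    overhead_mb: int         # hypothetical overhead figure, for illustration only


def reserved_mb(vm: Vm) -> int:
    """Memory the pool must set aside for one powered-on VM (simplified)."""
    return vm.mem_reservation_mb + vm.overhead_mb


def can_power_on(running: list[Vm], candidate: Vm,
                 pool_reservation_mb: int = POOL_MEM_RESERVATION_MB) -> bool:
    """Return True if the candidate still fits into the pool's fixed reservation."""
    used = sum(reserved_mb(vm) for vm in running)
    return used + reserved_mb(candidate) <= pool_reservation_mb


# Twenty running VMs without reservations, 150 MB of assumed overhead each.
running = [Vm(f"app-{i}", 0, 150) for i in range(20)]
small_vm = Vm("web-01", 0, 120)
appliance = Vm("vendor-appliance", 16 * 1024, 200)  # ships with a full reservation

print(can_power_on(running, small_vm))   # True
print(can_power_on(running, appliance))  # False
```

In this model a VM without its own reservation only costs the pool its overhead, while an appliance deployed with a large reservation does not fit at all.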

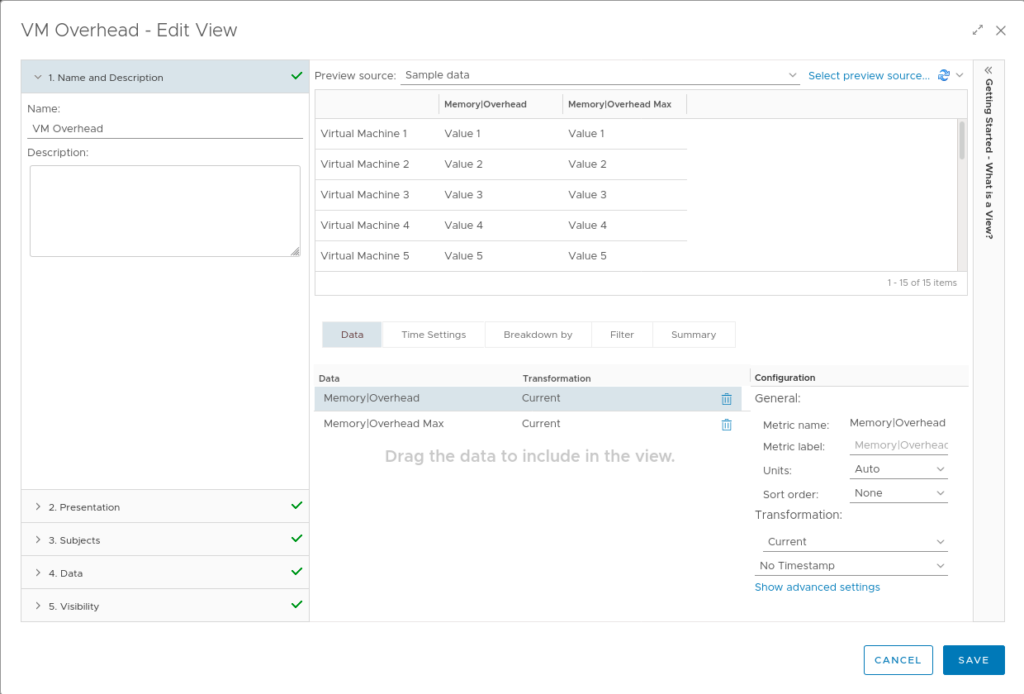

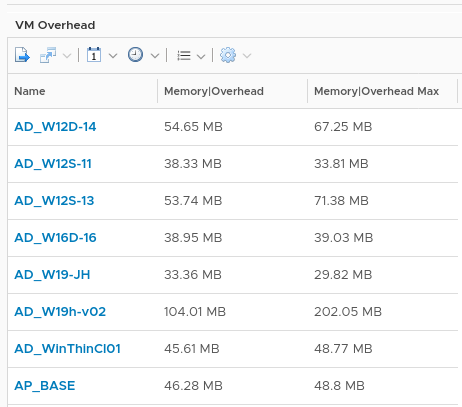

A vROps view can be useful to work out the proper amount of reservation and to right-size all VMs, e.g. every three months. To check VM overhead on powered-on VMs, just create a new View of type List with the metrics Memory|Overhead and Memory|Overhead Max.

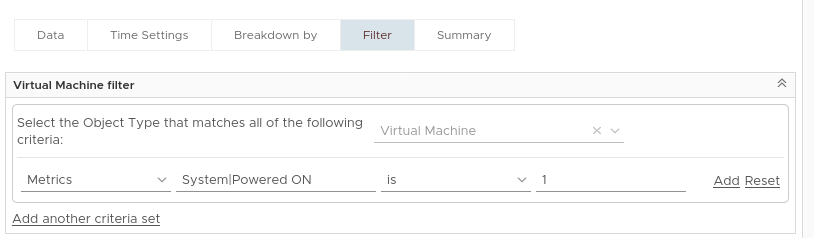

To list only powered-on VMs, add a filter:

Apply the view to a folder, a cluster or just a resource pool:

To limit the deployment of VMs with reservations (e.g. vendor appliances are often deployed with CPU and MEM reservations), I disabled the expandable reservation option on each RP. Furthermore, this trick should also help to limit oversized, powered-on VMs (often not used), because those VMs consume more overhead, which is capped at 16GB per RP.

The rest of the configuration is pretty simple: with no CPU/MEM limit per RP, most of the cluster resources are available to every pool until contention occurs, at which point the hypervisor shares them equally between the pools.
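As a rough illustration of that equal split, here is a minimal sketch of share-based division under contention. It assumes every pool demands more than its slice, uses 4000 as the CPU share value for a Normal pool, and takes a made-up cluster capacity; `split_under_contention` is a hypothetical helper, not a vSphere API.

```python
def split_under_contention(capacity: float, shares: dict[str, int]) -> dict[str, float]:
    """Divide capacity between sibling pools in proportion to their shares."""
    total = sum(shares.values())
    return {pool: capacity * value / total for pool, value in shares.items()}


NORMAL_SHARES = 4000    # assumed CPU share value for a pool at Normal priority
CLUSTER_MHZ = 160_000   # hypothetical cluster CPU capacity

# Eight sibling pools, all at Normal priority.
pools = {f"team-{i}": NORMAL_SHARES for i in range(1, 9)}
entitlement = split_under_contention(CLUSTER_MHZ, pools)

print(entitlement["team-1"])  # 20000.0
```

Because all eight pools carry the same share value, each one ends up with one eighth of the contended capacity, regardless of how many VMs it holds.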

vSphere 7.0 introduced a new feature, Scalable Shares, where the number of shares is determined by the number of VMs in the pool and the priority of the pool. It is not useful in my case above.
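One plausible reading of why it does not fit here: if a pool's weight grows with its VM count, a team running more VMs gets a larger slice, which works against an equal split between teams. The toy model below only illustrates that effect and is not the actual Scalable Shares formula; the weights, VM counts and helper names are all assumptions.

```python
# Relative priority weights (Low : Normal : High = 1 : 2 : 4); toy values.
PRIORITY_WEIGHT = {"low": 1, "normal": 2, "high": 4}


def scalable_weights(vm_counts: dict[str, int], priority: str = "normal") -> dict[str, int]:
    """Toy model: a pool's weight scales with the number of VMs it contains."""
    return {pool: count * PRIORITY_WEIGHT[priority] for pool, count in vm_counts.items()}


def fractions(weights: dict[str, int]) -> dict[str, float]:
    """Turn raw weights into each pool's fraction of contended resources."""
    total = sum(weights.values())
    return {pool: weight / total for pool, weight in weights.items()}


vm_counts = {"team-a": 30, "team-b": 10}  # hypothetical VM counts per pool

static = fractions({pool: PRIORITY_WEIGHT["normal"] for pool in vm_counts})
scaled = fractions(scalable_weights(vm_counts))

print(static)  # {'team-a': 0.5, 'team-b': 0.5}
print(scaled)  # {'team-a': 0.75, 'team-b': 0.25}
```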